One of the great mysteries of introductory programming education is what’s commonly known among computing education practitioners as the double hump.

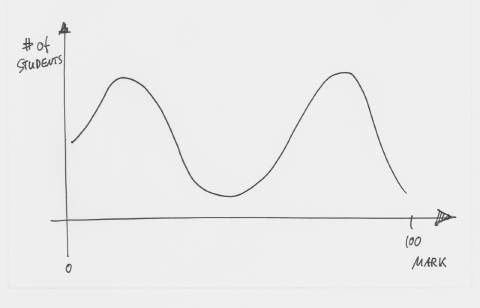

The double hump refers to the marks distribution in a typical introductory programming course. Here is an example:

Figure 1: The double hump distribution of marks in a programming course

This graph shows a typical distribution of final marks in a first programming course. We have a whole bunch of students getting very good marks and a lot of them failing. Mark Guzdial, in a blog post, calls it the 20% Rule:

“In every introduction to programming course, 20% of the students just get it effortlessly — you could lock them in a dimly lit closet with a reference manual, and they’d still figure out how to program. 20% of the class never seems to get it.”

The surprising thing about this distribution is how constant it seems to be. It has been observed in programming courses all over the world, largely independent of geographical or social context, and over a long period of time: The same pattern that we observe now existed 10 years ago, and 20 years ago, and 30 years ago.

(And an ironic side note is that we as teachers tend to react to this by aiming our teaching at the medium level—thus teaching to a group that contains hardly any students at all…)

So what is this telling us?

For programming teachers, this poses some interesting questions and challenges. One conclusion has been that the ability to understand programming is somehow intrinsic: that there are people who are just good at it, and others who simply cannot get it. This has led to a whole lot of research about programming ability predictors: ways in which we can predict, by looking at people’s prior activities, performance, social context, or any other aspect of their lives, or with a hopefully simple test, whether or not they will be successful in learning programming before we make them go through it.

Some people really believe in these predictors, some do not. I am generally in the second category. Let’s say, I am at least highly sceptical. The first paper linked above, for example, draws what I believe to be severely invalid conclusions. It interprets the data in a way that the data just does not support. But that’s a story for another day.

What I really want to talk about is an idea that I picked up from a fascinating seminar that Anthony Robins gave a few months ago in our department: that the high failure rate we are observing might not be caused by any kind of intrinsic capability, but by the sequential nature of the material we are teaching.

What is going on might be this: The material in programming courses is highly hierarchical. Every topic strongly builds on the topics previously covered. Thus, if you don’t understand one section of the course, you will likely also struggle in the following sections, unless you spend extra time to catch up and make up for what you missed before.

Therefore, programming courses are self-amplifying systems. If you fall behind a bit towards the beginning, for whatever reason, you are likely to fall more and more behind in the following weeks and months. If you get ahead early, you are likely to stay ahead, or even move further ahead. The short of it is that this dependency of performance on prior performance in the course will automatically divide the class into two distinct groups whose performance drifts further apart as time goes on.

Voilà, the double hump is born.
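The feedback loop described above can be illustrated with a toy simulation. This is only a minimal sketch and not Anthony Robins’s model: the starting probability of 0.5, the step size, the clamping bounds, the class size, and all function names are assumptions chosen for illustration. Each simulated student works through a sequence of topics; understanding a topic raises the chance of understanding the next one, and missing a topic lowers it.

```python
import random
from collections import Counter


def simulate_student(rng, n_topics=12, step=0.15):
    """Return how many of n_topics one simulated student understands.

    Assumed model (illustrative only): the chance of understanding the
    next topic starts at 0.5, rises by `step` after each success and
    falls by `step` after each failure, clamped to [0.05, 0.95].
    """
    p = 0.5
    understood = 0
    for _ in range(n_topics):
        if rng.random() < p:
            understood += 1
            p = min(0.95, p + step)
        else:
            p = max(0.05, p - step)
    return understood


def simulate_class(n_students=2000, n_topics=12, step=0.15, seed=1):
    """Simulate a whole class and return the list of per-student scores."""
    rng = random.Random(seed)
    return [simulate_student(rng, n_topics, step) for _ in range(n_students)]


def print_histogram(scores, n_topics=12, width=50):
    """Print a text histogram of scores, scaled to `width` characters."""
    counts = Counter(scores)
    peak = max(counts.values())
    for score in range(n_topics + 1):
        bar = "#" * round(width * counts[score] / peak)
        print(f"{score:2d} | {bar}")


if __name__ == "__main__":
    print("No feedback (step=0):")
    print_histogram(simulate_class(step=0.0))
    print("With feedback (step=0.15):")
    print_histogram(simulate_class(step=0.15))
```

With `step=0` every topic is an independent coin flip, so the scores form a single hump around the middle. With a positive `step`, early luck compounds and the scores tend to pile up towards both ends. The sketch says nothing about whether real courses behave this way; it only shows that dependence on prior performance is enough to produce two groups.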

Anthony, in his seminar, made some very interesting points that provided strong indicators that this might be what’s going on. He is, as far as I know, in the process of publishing these findings, so I won’t go into too much detail here. (I will update this post with a link once his paper is out.) We can leave discussion of whether we believe this or not for another day.

For today, I’ll simply state that I find it entirely plausible that this is at the root of the problem. So for now, I will work with the premise that the double hump is not caused by some intrinsic personal capability, but by the strong hierarchical nature of the material we teach.

The question that I asked myself then is: What should we do about it?

My conclusion is that we need to shift from quantity-oriented teaching to quality-oriented teaching. Here is what I mean.

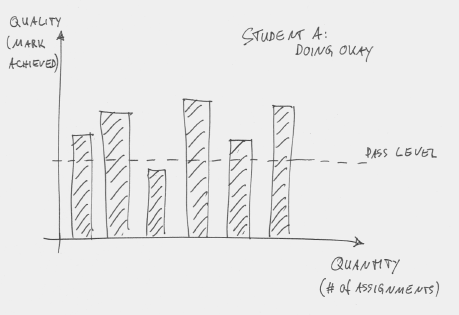

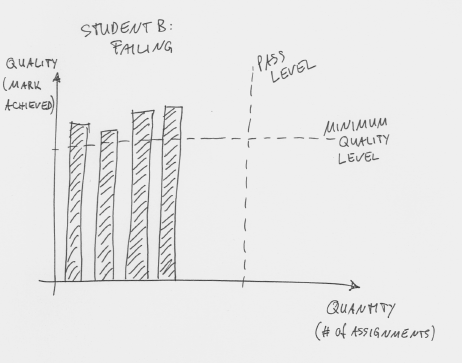

In a typical programming course at a university today, we may have a number of assessments. (For the purpose of this argument it does not matter what that number is—it might be a smaller number of large assessments or a larger number of small ones.) Students get marked on every assessment, and they pass the course if their average grade is above some defined pass level. Figure 2 shows a student in this scheme.

Figure 2: A good student attempts six assessments and passes most of them

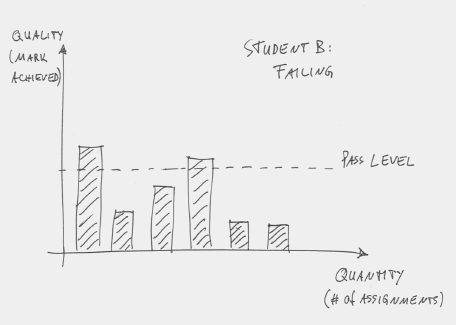

In this example, there are six assessments and the student is doing okay: The average mark is above the pass level. The pattern for a weak student might look like Figure 3: The student also attempts six assessments, but mostly fails.

Figure 3: A weak student attempts six assessments and mostly fails

And this is a problem. It is not only a problem that the student is failing, it is a failure to teach sensibly if we believe in the hierarchical nature of our material. Once the student severely fails Assessment 2, there is really no point in letting them move on to Assessment 3. In fact, it’s ridiculous. We are essentially saying: “We have just established that you did not understand concept X, so now go on to study concept Y, which is harder and builds on concept X.”

Now, that’s just plainly stupid.

We have essentially demonstrated that you have no chance to move to something harder, and then make you move on to something harder. Without giving you a break to catch up. The problem is: This is exactly what we usually do.

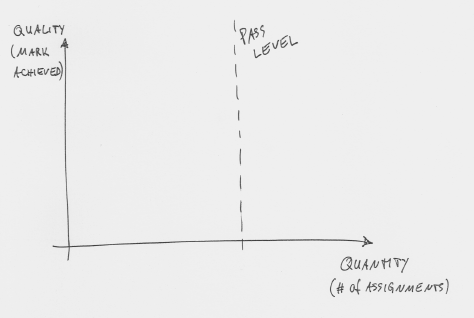

So what can we do about it? The solution might be to re-orient the pass line in our diagram (Figure 4).

Figure 4: Re-thinking the pass level

Typically, in our courses, the x-axis (number of assignments students do) is fixed, while the y-axis (quality of submissions) is variable. And we define success as reaching a given level of quality (see Figure 3).

We should turn that around. We should fix the y-axis and make the x-axis variable, thus re-defining success as successfully completing a certain number of assessments. This means that every student has to achieve a defined minimum level of quality in each assessment before they are allowed to move on to the next assessment. The differentiation between students then is not what quality level they have achieved, but how many stages of assessment they have managed to complete. In a diagram, it looks like this: Figure 5 shows a good student at the end of the course. Nine assessments have been completed, beyond the pass level of assessment 6.

Figure 5: A good student completing enough assessments to pass

The graph for a struggling student then looks like this (Figure 6).

Figure 6: A struggling student failing to reach pass level

This student has not reached pass level and fails the course. Note, however, the difference here to our student in Figure 3: There are no assessments recorded below the minimum accepted quality level. (Every submission attempt with insufficient quality is simply judged as “not completed”.)
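The difference between the two pass rules can be written down in a few lines. This is a minimal sketch under stated assumptions: the function names, the pass mark of 50, the minimum quality of 70, and the sample marks are all hypothetical; only the pass level of six completed assessments is taken from the figures above.

```python
def passes_by_average(marks, pass_mark=50):
    """Conventional scheme: every assessment is attempted and marked,
    and the student passes if the average mark reaches the pass mark."""
    return sum(marks) / len(marks) >= pass_mark


def stages_completed(attempts, min_quality=70):
    """Proposed scheme: `attempts` lists marks in chronological order.

    The student stays on the current assessment until one attempt
    reaches `min_quality`, and only then moves on to the next. Attempts
    below the bar count as "not completed" and leave no failing mark.
    """
    completed = 0
    for mark in attempts:
        if mark >= min_quality:
            completed += 1  # this stage is done; next attempt is a new stage
    return completed


def passes_by_progress(attempts, required_stages=6, min_quality=70):
    """The student passes once enough stages have been completed."""
    return stages_completed(attempts, min_quality) >= required_stages


if __name__ == "__main__":
    weak_marks = [55, 20, 30, 25, 40, 35]              # six marked assessments
    struggling = [40, 75, 50, 60, 72, 45, 80]          # repeated attempts
    strong = [90, 85, 88, 65, 92, 80, 95, 78, 83, 91]  # one repeat only

    print(passes_by_average(weak_marks))                        # False
    print(stages_completed(struggling), passes_by_progress(struggling))
    print(stages_completed(strong), passes_by_progress(strong))
```

In the second scheme the struggling student still fails the course, but the record shows three stages completed at acceptable quality rather than a string of failing marks.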

I don’t believe that this scheme will solve all our problems, but I do believe that it has a number of advantages:

- Students may have a higher chance of success. Instead of early failure leading almost automatically to a sequence of further failures, they have a chance to recover.

- Students who learn at different speeds can all survive.

- Especially good students can go even further than they did in the past, because we can allow them to move forward according to their own capabilities.

- More flexibility in dealing with temporary problems: If a student misses the first three weeks of class, be it because of illness or any other reason, they may still pass the class instead of having no chance.

- Even failing students learn something. Instead of failing to understand every topic (Figure 3), they can at least spend their time understanding a few topics (Figure 6).

There are a number of problems and challenges as well, of course. The obvious difficulty is that we must be able to allow different students to work on different material at the same time. Every student effectively progresses at their own personal pace, and the teaching must support this.

Is this organisationally possible? I think so.

It is not easy, and it requires us to severely change how we teach, but I think it’s worth it. It will require a different form of instruction (away from big lectures to the whole class) and a different form of assessment (away from giving everyone the same task and assessing it by just submitting source code). Otherwise there would be a problem with plagiarism. We might need to assess by interviewing students to actually test their understanding!

Does this create work? Sure. But I think it’s worth it. And I actually believe that, once the change is made, the workload for teachers will be comparable to what we have now.

In an ideal world, this way of learning would have the result that different students learn the same material in a different amount of time. In reality, we will not get away from fixed length courses for the time being.

This means that, at the end of a course, different students will have studied different amounts of material. Some students who move on to the next course will have covered the advanced material, some will not.

There will be an objection from other teachers that we let students pass who have not seen all the material, and that they do not know some concepts that they should know.

While this is true, I don’t believe that this is any different from our current situation. Which teacher would really claim that all students who passed their course have understood all concepts they were teaching? If one of my current students just barely clears the pass mark, there is a lot that we have covered that they don’t know. Claiming that they know more material because I talked over their heads about more advanced concepts for longer is nonsense.

I would rather have a student who properly understands 50% of the concepts than a student who half understands (and half misunderstands!) everything.

You have just turned learning into a game :).

Minimum quality level: you either complete the (game) level or not. If you managed to get 100% collectibles or complete the level with some additional constraints, good for you, you get extra points.

Redefining pass level as number of assignments: there’s a final level and if you completed it, you’ve beaten the game. If you are good and unlocked side quests or completed secret levels, good for you, you get extra points again.

I can understand that the initial hump is created by people who fall behind at an early stage. Failing to understand basics such as the mental model of a variable, or syntax, would lead you to be in the left-hand hump regardless of future material. But shouldn’t the sequential nature of the course mean that the hump should decline gradually towards the right-hand side (as people fail to learn further than a given stage), leaving you with a left-skewed bell curve, rather than a bimodal distribution? Interesting ideas though — reminds me a bit of this article: http://www.inference.phy.cam.ac.uk/mackay/exams.pdf

I think this is very interesting. The (I assume made-up) graphs don’t really support the idea of grade amplification however. Furthermore, a student missing the first 3 weeks of lectures will still struggle, unless we give everyone a private teacher.

However, I do think the idea is very interesting. In fact, it could be taken even further: a university/school could just offer assessments (instead of courses) that a student could complete when they feel like it. When a student wants to do a new assignment, he goes to the corresponding teacher, and gets the required instruction (maybe there should be instruction sessions at fixed intervals to reach more students at a time). This would allow everyone to learn at their own pace and could potentially lead to intense customization of one’s education. Care should be taken that someone doesn’t just pick a lot of ‘easy’ or ‘entry-level’ assignments. To keep with the gaming metaphor, ‘levels’ could be assigned to each assessment and higher levels should give more credit.

A lot of practical issues would need to be worked out, but I think it is interesting to think about.

Excellent ideas. I teach Continuing Education (US) part-time for (mostly) adults and have seen this in my basic Java course. Some folks just don’t get it at the start and never recover. Some drop after 1 or 2 sessions and some just plod on (there is no pass/fail requirement). I recently switched the order of topics to see if that helps but it still assumes a constant pace of absorption.

You’re forgetting about the “click effect” — when a student suddenly “gets it”, and goes from under-performing to better-than-average in an instant. Watching the virtual light bulb go on is a wonderful thing, partially because of its rarity.

In an introduction to programming course, an awful lot of material can be “made up” very quickly, once that light goes on, as the concepts that were seen but not understood become obvious and intuitive. (Upper division courses aren’t nearly so kind.) Thus, credit for assignments should be of increasing weight.

Further, in teaching an introduction to programming course, I found that making the weekly assignment a motivational exercise (rather than a knowledge-acquisition check) worked much better with the lectures: I’d structure the assignment so that the clear and obvious approach, given their knowledge, was tedious and boring — and then, in the lecture after the assignment was due, I’d introduce the concept / feature that would let them push the tedious and boring tasks to the computer.

Constructing the assignments in this way was much harder than simply following some textbook’s layout and pacing for the course; the additional work paid off for the students in that the final curve was no longer a double-hump.

The proposed approach doesn’t really seem to lend itself, then, to the problem of an introduction to programming course; the problem is not that concepts build on one another, but that until the student groks that computers are boxes that do what you tell them, none of the concepts will make sense, and after, they’ll be obvious.

For later courses, however, the proposed approach seems brilliant.

My introductory programming course was at the University of Texas in 1989, and it was essentially structured the way you suggest. All assessments were conducted in a dedicated testing lab. The goal was to pass a certain number by the end of the term. When you passed one, you were eligible to take the next. I can’t comment on how successful it was for most students, but it worked well enough for me. Maybe someone has data on the distribution of completion “velocities” of students who took that course.

I fully disagree with the statement that the ability to program is not somehow intrinsic to a person. I have taken to programming like a fish to water. I only need to read the API or see an example on a topic and I can implement it and have a decent idea of when is the right time to use it. When I was just learning a few years ago, the syntax and structure just fell into place to the point where they were never a factor. The lightbulb was simply on. However, I recently spent 15 minutes trying to explain to my friend what a “loop” is for. I had to draw a diagram for him, complete with code block and arrow curling out the bottom into the top. He’s a moderately intelligent person, but he doesn’t have the intuitive and immediate grasp of logical structure that I believe is a precursor to being an excellent (and effortless) programmer.

Good blog. I had the same situation when I taught a sophomore level C++ algorithms course last year. After the mid-term resulted in a “double hump” I started asking the students on the low end what was going wrong. Turns out they were never required to write code or programs during their intro java classes (teachers taught language and gave them multiple choice exams). The better students had all been coding during high school.

During the spring semester I made changes. I strongly encouraged students without laptops to skip the $100 textbooks and buy a $275 netbook so they had their own tools to work with and avoid frustration with school computers. Then each period changed to 1/2 lecture on concepts, then 1/2 lab to actually code it up. The more advanced students were required to use GUI toolkits to run their programs and present results. The homeworks were changed to produce a continuing build up to a final project game. The results in the class were much better – every student was able to have some level of a running game for the final. The lower end students were able to progress and avoid the frustration and discouragement which is the root of many of their failures.

Of course this is a lot of work for the professor. It is a shame that 50% of the professors are pathetic, unwilling to put in extra work, unqualified in newer techniques, and protected by tenure for life. It seems to take one of us low paid adjuncts to implement change, although I have reached the point of asking why should I be paid squat for compensating for the pathetic well paid tenured incompetents. So, like in much of America, the failures get propped up and the good people quit or are underemployed. In many universities, the students are being ripped off.

Actually, not necessarily. You could also solve this by moving students between classes. Imagine a single CS class has five different key lessons within it. You have a few lecturers teaching the class.

On day one, you evenly distribute all of the students across the lecturers. Everyone learns lesson one. The students who complete that lesson move into one pool, students who don’t go into another. Based on the size of those two pools, you distribute the lecturers between elaborating on lesson 1 and moving on to lesson 2. Then you move the students to the appropriate lecturer.

Repeat with every lesson.
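The redistribution step in this scheme can be sketched as a proportional split. This is a minimal illustration, not something the comment specifies: the function name, the pool names, and the choice of the largest-remainder method for rounding are all assumptions.

```python
def allocate_lecturers(pool_sizes, n_lecturers):
    """Split n_lecturers across student pools in proportion to pool size.

    `pool_sizes` maps a pool name to its number of students. Rounding
    uses the largest-remainder method (an assumed choice): each pool
    first gets the whole-number part of its share, and the lecturers
    left over go to the pools with the largest fractional parts.
    """
    total = sum(pool_sizes.values())
    if total == 0:
        return {pool: 0 for pool in pool_sizes}

    quotas = {pool: n_lecturers * size / total
              for pool, size in pool_sizes.items()}
    allocation = {pool: int(quota) for pool, quota in quotas.items()}

    leftover = n_lecturers - sum(allocation.values())
    by_remainder = sorted(pool_sizes,
                          key=lambda pool: quotas[pool] - allocation[pool],
                          reverse=True)
    for pool in by_remainder[:leftover]:
        allocation[pool] += 1
    return allocation


if __name__ == "__main__":
    # After lesson 1: 65 students are ready to move on, 35 are not.
    pools = {"repeat lesson 1": 35, "lesson 2": 65}
    print(allocate_lecturers(pools, 4))
    # {'repeat lesson 1': 1, 'lesson 2': 3}
```

One limitation of a purely proportional split is that a very small pool can end up with no lecturer at all, so a real timetable would probably need a minimum of one lecturer per non-empty pool.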

“The obvious difficulty is that we must be able to allow different students to work on different material at the same time. Every student effectively progresses at their own personal pace, and the teaching must support this.”

In my opinion, this is the key ingredient of this approach, particularly the “teaching” part. How can we pull this off given an Intro to Programming course can have up to 50-60 students at the very least? Making the assignments’ due dates extensible is simple, but how can we teach students who work at different paces? Can we rely on TAs to provide supplementary teaching to the students in the lower bracket? Would pairing exercises (the first 20% with the last 20%) work?

Would teaching intro programming entirely in a lab environment, with recorded lectures and optional extra explanation, work better than a lecture setting? Having the students self-study with notes and a hands-on approach, allowing them to progress with the lecture slowly and to be able to request more basic explanations that a high-level student can just skim by?

Two things that come to mind when I read this:

1. I just started reading Outliers by Malcolm Gladwell, and the first chapter is about a similar phenomenon in several athletic professions (as well as in education). I was wondering if you’ve had a chance to read this and factor in his data and conclusions?

2. There is a manufacturing methodology which originates from lean manufacturing and the Toyota production system called kanban (a signal/pull based system which prescribes limitation of work in progress and removal of waste amongst other things). It sounds like an interesting option for a school system would be to treat it as a kanban system, with people being the workitems and the workflow is the stages of each course (need to think about how we limit WIP for each student as well), but it will allow you to find bottlenecks (where people get stuck), advance the better students faster, keep the slower students at the same stage until they are ready for the next stage of the flow, find out which stages/courses need more teachers etc…

If the sequential nature of the course were at the root of the double hump, it would also happen in other fields such as foreign languages, medicine, or maths. Have you checked that?

Here we usually attribute this phenomenon to the combination of ‘easy matter’ and ‘two degrees of motivation’. We recommend increasing the content of the course in order to center the upper bell around 5 (or C in the USA), and ignoring the lower bell.

Aside note: Here we call the chart ‘histogram’, the shape ‘bimodal’, and that type of courses ‘cumulative matter’.

Very interesting idea.

This reminds me a great deal of how music students are graded. – Typically, they have a series of “pass-offs” which are sequentially harder to do and require more musical skill. The student’s grade is largely determined by how many “pass-offs” they get done. Too few and you fail, many of them and you get an ‘A’.

Wonder if I can try this in the CS classes I teach?

Funny, you’ve just described the approach I’ve used in my introductory programming course at the high school level for the past twelve years.

And you’re right; it works.

@Smokey

programming is effortless for you? Maybe find something harder to work on.

I have to say, the idea of needing to pass a test before proceeding appeals to me greatly (assuming subsequent tasks actually require previous knowledge). Video games really should be the current model that educators should be looking at. Good game makers look very hard at real game players playing a game, and will modify earlier parts of games to make it clear how to use some in game mechanic. And most game players can’t help but learn while they’re playing the game (ignoring the bias involved in that the player often wants to play the game).

Also, you should take a look at this paper (“the camel has two humps”) which has some experimental data that suggests why the double hump exists.

http://www.cs.mdx.ac.uk/research/PhDArea/saeed/paper1.pdf

These ideas are not new. This is the same logic behind “programmed instruction” and “personalized system of instruction”. Google it.

I think your ideas are interesting and worth pursuing further.

The notion that all students haven’t learned all the material is a popular one but flawed. I have some experience from taking courses (at university level) where some basic courses in statistics and maths were built around open-ended projects in small groups. The first thing that scared people by this methodology was that the groups covered different material. Would the students really have the same chance of making the next course?

My experience is that it is not a problem. You have to repeat earlier material anyway. It is a natural part of the learning process.

This is a really intriguing argument. I would say we at nTier Training would concur with the conclusions. We train professionally every week (Java, OO, Agile, Scrum, Spring, Java Server Faces and related courses) and often have the same “double hump” problem…in fact, it’s exacerbated by the fact that we’re teaching at an accelerated pace (our average course is 1-2 weeks in length).

One solution we’ve used to mitigate this problem is to build in very frequent reviews of key concepts DAILY. Other ideas to help include pair programming and peer reviews. I’m curious to hear more about other techniques to address this problem.

I agree with Neil Brown that if the hump model is due to the sequential nature of the class, we should instead see a left-skewed curve. Additionally, we should see this effect repeated in other sequential courses as well – calculus, physics, and within areas of other sciences as well. I’m hesitant to assign an effect like this to any singular cause – I expect there are many factors all colliding simultaneously to produce this, including natural talent AND sequentiality.

mmmm… Having completed many computer courses myself – including a degree in Software Engineering – I have to say that I disagree with you… I really wanted to learn how to program, especially in C++ (having spent years learning an out of date language). Going to University, I thought, would increase my knowledge and I would leave Uni as a competent programmer – wrong!!!

In all of my University experience, not one teacher ‘taught’ how to program properly; they only taught the basics. I ended up having a little knowledge in lots of different languages, but not really being competent (to a high level) in any of them. How disappointing!!

I think the real problem here are the teachers themselves, my experience showed me that most teachers don’t have the enthusiasm needed to excite the students, or even the drive to help others who are struggling, if you’re behind – tough cookie.. Is that really teaching? I don’t think so.

As an afterthought, over 100 students came on the course in yr1, and in the final yr there were only 18 of us left – now that speaks volumes.
