By Katie Sambrook, Head of Special Collections

The Foyle Special Collections Library has recently added the following item to its collections.

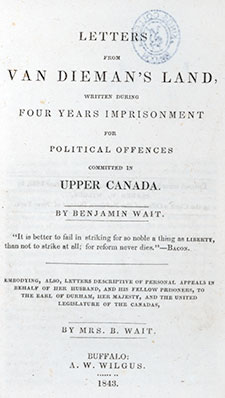

Benjamin Wait. Letters from Van Dieman’s Land. Buffalo: AW Wilgus, 1843 [Foyle Special Collections Library, Miscellaneous Collection HV8950.T3 WAI]

The frontispiece shown here depicts the author Benjamin Wait (1813-95) on board a convict ship.

Wait took an active part in the Upper Canadian revolt of 1837. He was captured by government forces and later sentenced to death for treason, but, following representations by his wife Maria, the sentence was commuted to transportation, and Wait was sent to the penal colony of Van Diemen’s Land (Tasmania).

Maria continued to campaign tirelessly for his freedom, but her efforts ultimately proved unnecessary: Wait and a fellow Canadian patriot and convict, Samuel Chandler, escaped from Tasmania on board an American whaling ship and reached the United States in 1842.

Sadly, Maria died shortly after the reunion with her husband. Wait never returned to Canada, spending the rest of his life in the USA.

Wait’s Letters provide a lively and detailed account of his part in the Upper Canadian revolt and subsequent exile in Tasmania.

The book also includes a number of Maria’s letters, documenting her efforts to secure her husband’s pardon.